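This article presents an example of a Python crawler that scrapes Baidu Baike entries, shared here for reference. The details are as follows:

Below is an example I wrote that crawls Baidu Baike entries. It is split into five parts: the main entry point, a URL manager, a page downloader, a page parser, and an output handler.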

Crawler main entry point

    from crawler_test.html_downloader import UrlDownLoader
    from crawler_test.html_outer import HtmlOuter
    from crawler_test.html_parser import HtmlParser
    from crawler_test.url_manager import UrlManager


    class MainCrawler():
        def __init__(self):
            self.urls = UrlManager()
            self.downloader = UrlDownLoader()
            self.parser = HtmlParser()
            self.outer = HtmlOuter()

        def start_craw(self, main_url):
            print('Crawler started...')
            count = 1
            self.urls.add_new_url(main_url)
            while self.urls.has_new_url():
                try:
                    new_url = self.urls.get_new_url()
                    print('Crawl %d: %s' % (count, new_url))
                    html_cont = self.downloader.down_load(new_url)
                    new_urls, new_data = self.parser.parse(new_url, html_cont)
                    self.urls.add_new_urls(new_urls)
                    self.outer.conllect_data(new_data)
                    # Stop after 10 successfully crawled pages.
                    if count >= 10:
                        break
                    count += 1
                except Exception as e:
                    # A failed page is skipped and does not count toward the limit.
                    print('Failed to crawl one page: %s' % e)
            self.outer.output()
            print('Crawler finished.')


    if __name__ == '__main__':
        main_url = 'https://baike.baidu.com/item/Python/407313'
        mc = MainCrawler()
        mc.start_craw(main_url)

URL manager

    # URL manager
    class UrlManager():
        def __init__(self):
            self.new_urls = set()  # URLs waiting to be crawled
            self.old_urls = set()  # URLs already crawled

        def add_new_url(self, url):
            if url is None:
                return
            elif url not in self.new_urls and url not in self.old_urls:
                self.new_urls.add(url)

        def add_new_urls(self, urls):
            if urls is None or len(urls) == 0:
                return
            else:
                for url in urls:
                    self.add_new_url(url)

        def has_new_url(self):
            return len(self.new_urls) != 0

        def get_new_url(self):
            # Move one pending URL to the crawled set and return it.
            new_url = self.new_urls.pop()
            self.old_urls.add(new_url)
            return new_url
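Taken together, the main loop and the URL manager perform a visit-once traversal: a URL moves from the pending set to the crawled set when it is fetched, and links already present in either set are ignored. The sketch below replays that logic on an in-memory link graph instead of real pages, so it runs offline. The names `simulate_crawl` and `link_graph` and the page labels are hypothetical and serve only to illustrate the idea.

```python
def simulate_crawl(link_graph, start, limit=10):
    """Visit pages reachable from `start` at most once each, up to `limit` pages.

    `link_graph` maps a page name to the list of pages it links to
    (a stand-in for downloading and parsing a real page).
    """
    new_urls = {start}   # discovered but not yet visited
    old_urls = set()     # already visited
    visited_order = []
    while new_urls and len(visited_order) < limit:
        url = new_urls.pop()  # set.pop() returns an arbitrary element
        old_urls.add(url)
        visited_order.append(url)
        for link in link_graph.get(url, []):
            if link not in new_urls and link not in old_urls:
                new_urls.add(link)
    return visited_order


# Hypothetical link graph containing cycles (A <-> B, A <-> C).
graph = {'A': ['B', 'C'], 'B': ['A', 'C'], 'C': ['A', 'D'], 'D': []}
print(sorted(simulate_crawl(graph, 'A')))
```

Because `set.pop()` returns an arbitrary element, the order in which pages are visited after the first one may differ between runs; only the visit-once guarantee and the page limit are fixed.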

Page downloader

    from urllib import request


    # Page downloader
    class UrlDownLoader():
        def down_load(self, url):
            if url is None:
                return None
            else:
                rt = request.Request(url=url, method='GET')
                with request.urlopen(rt) as rp:
                    # Return the raw response bytes only for HTTP 200.
                    if rp.status != 200:
                        return None
                    else:
                        return rp.read()
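The downloader above sends a bare GET request with no headers and no timeout. Some sites respond differently to requests without a browser-like `User-Agent`, and a request without a timeout can block indefinitely. Whether Baidu Baike needs either is an assumption here, not something the original code states. A minimal sketch of a variant with both, in which `build_request`, `DEFAULT_USER_AGENT`, and `DEFAULT_TIMEOUT` are hypothetical names and values:

```python
from urllib import request

# Hypothetical values chosen for illustration; neither appears in the original code.
DEFAULT_USER_AGENT = 'Mozilla/5.0 (compatible; crawler-test)'
DEFAULT_TIMEOUT = 10  # seconds


def build_request(url, user_agent=DEFAULT_USER_AGENT):
    """Create a GET request that carries an explicit User-Agent header."""
    return request.Request(url=url, method='GET',
                           headers={'User-Agent': user_agent})


def down_load(url, timeout=DEFAULT_TIMEOUT):
    """Return the response body as bytes, or None for a missing URL or non-200 status."""
    if url is None:
        return None
    with request.urlopen(build_request(url), timeout=timeout) as rp:
        if rp.status != 200:
            return None
        return rp.read()
```

Network errors still raise exceptions, as in the original version, so the `try`/`except` in the main loop remains the place where a failed page is skipped.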

Page parser

    import re
    from urllib import parse

    from bs4 import BeautifulSoup


    # Page parser, built on BeautifulSoup
    class HtmlParser():
        def _get_new_url(self, main_url, soup):
            new_urls = set()
            # Keep only links to other entries, i.e. hrefs containing /item/%XX...
            child_urls = soup.find_all('a', href=re.compile(r'/item/(\%\w{2})+'))
            for child_url in child_urls:
                new_url = child_url['href']
                # Resolve relative links against the current page URL.
                full_url = parse.urljoin(main_url, new_url)
                new_urls.add(full_url)
            return new_urls

        def _get_new_data(self, main_url, soup):
            new_datas = {}
            new_datas['url'] = main_url
            new_datas['title'] = soup.find('dd', class_='lemmaWgt-lemmaTitle-title').find('h1').get_text()
            new_datas['content'] = soup.find('div', attrs={'label-module': 'lemmaSummary'},
                                             class_='lemma-summary').get_text()
            return new_datas

        def parse(self, main_url, html_cont):
            if main_url is None or html_cont is None:
                return
            soup = BeautifulSoup(html_cont, 'lxml', from_encoding='utf-8')
            new_url = self._get_new_url(main_url, soup)
            new_data = self._get_new_data(main_url, soup)
            return new_url, new_data
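The parser keeps only links whose `href` contains `/item/` followed directly by one or more percent-encoded bytes, then resolves each one against the page URL with `urljoin`. The sketch below reproduces that filtering step on plain strings, without BeautifulSoup, assuming the pattern is applied with search semantics. The function `filter_item_links` and the sample hrefs are hypothetical.

```python
import re
from urllib import parse

# Same pattern as in the parser; the backslash before % is optional in Python's re.
ITEM_LINK = re.compile(r'/item/(%\w{2})+')


def filter_item_links(base_url, hrefs):
    """Return absolute URLs for the hrefs that look like percent-encoded entry links."""
    return {
        parse.urljoin(base_url, href)
        for href in hrefs
        if href and ITEM_LINK.search(href)
    }


base = 'https://baike.baidu.com/item/Python/407313'
sample_hrefs = [
    '/item/%E8%AE%A1%E7%AE%97%E6%9C%BA',  # percent-encoded title: kept
    '/item/Python/407313',                # plain ASCII title: not matched
    '/view/123.htm',                      # not an entry link
    None,                                 # anchor without an href
]
print(filter_item_links(base, sample_hrefs))
```

One consequence of the pattern is that entry links with plain ASCII titles, such as `/item/Python/407313`, are not followed; only links whose title begins with a percent-encoded character are collected.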

Output handler

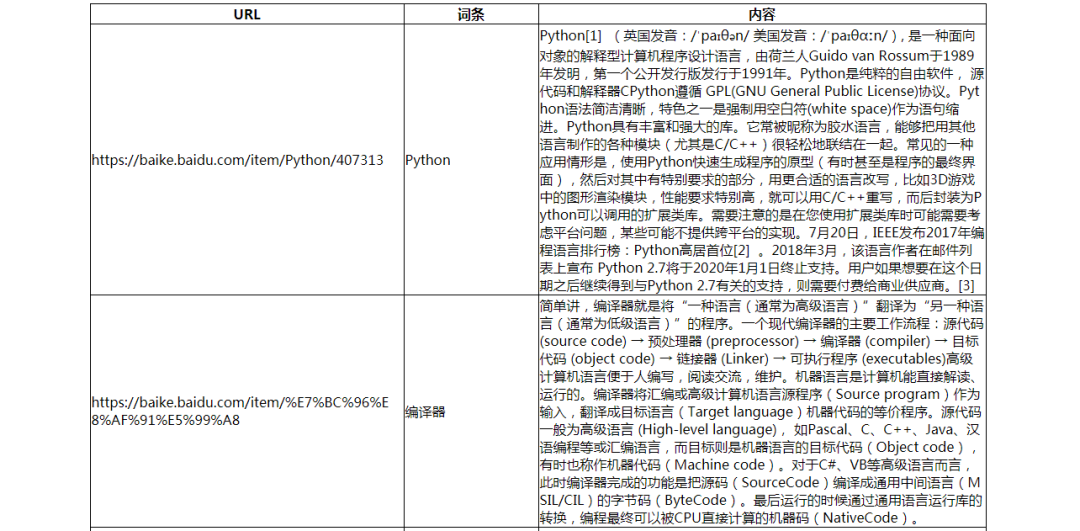

    # Output handler
    class HtmlOuter():
        def __init__(self):
            self.datas = []

        def conllect_data(self, data):
            if data is None:
                return
            self.datas.append(data)
            return self.datas

        def output(self, file='output_html.html'):
            # The HTML tags were lost from this listing; a plain, unstyled table is assumed.
            with open(file, 'w', encoding='utf-8') as fh:
                fh.write('<html>')
                fh.write('<head>')
                fh.write('<meta charset="utf-8">')
                fh.write('<title>Crawler results</title>')
                fh.write('</head>')
                fh.write('<body>')
                fh.write('<table>')
                fh.write('<tr>')
                fh.write('<th>URL</th>')
                fh.write('<th>Entry</th>')
                fh.write('<th>Content</th>')
                fh.write('</tr>')
                for data in self.datas:
                    fh.write('<tr>')
                    fh.write('<td>{0}</td>'.format(data['url']))
                    fh.write('<td>{0}</td>'.format(data['title']))
                    fh.write('<td>{0}</td>'.format(data['content']))
                    fh.write('</tr>')
                fh.write('</table>')
                fh.write('</body>')
                fh.write('</html>')
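The output handler writes scraped text straight into the page, so a title or summary containing `<` or `&` could break the generated table. A minimal sketch of an escaping variant that builds the table as a string with the standard library's `html.escape`; `render_table` is a hypothetical helper, not part of the original code, and the column labels are illustrative:

```python
import html


def render_table(datas):
    """Build the HTML table as one string, escaping every cell value."""
    rows = ['<tr><th>URL</th><th>Entry</th><th>Content</th></tr>']
    for data in datas:
        cells = ''.join(
            '<td>{0}</td>'.format(html.escape(str(data[key])))
            for key in ('url', 'title', 'content')
        )
        rows.append('<tr>{0}</tr>'.format(cells))
    return '<table>\n{0}\n</table>'.format('\n'.join(rows))


# Hypothetical record in the same shape the parser produces.
sample = [{'url': 'https://baike.baidu.com/item/Python/407313',
           'title': 'Python',
           'content': 'a < b & c'}]
print(render_table(sample))
```

The returned string could be written between the `<body>` tags in place of the individual `fh.write` calls for the table.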

Result (partial):

Original article: https://www.cnblogs.com/wcwnina/p/8619084.html

Reposted by: Python编程学习圈

(Copyright belongs to the original author; this repost will be removed on request.)
